Welcome back! Agents argue with each other in public, data centers drift toward orbit, headsets learn to read faces, and text prompts start spinning up whole worlds you can walk through. The common thread is AI slipping into its own environments and infrastructure layers, where humans feel less like operators and more like spectators steering from the edges. This issue sits in that in-between space and follows what changes when the systems doing the thinking no longer have to share our screens or our ground.

In today’s Generative AI Newsletter:

HubSpot unveils 200+ AI-powered income ideas.

Moltbook: a Reddit-like platform for 1.5M AI agents.

China plans gigawatt AI data centers in space.

Apple buys Q.ai for hands-free control.

Google Genie turns text prompts into explorable worlds.

Latest Developments

How can AI power your income?

Ready to transform artificial intelligence from a buzzword into your personal revenue generator?

HubSpot’s groundbreaking guide "200+ AI-Powered Income Ideas" is your gateway to financial innovation in the digital age.

Inside you'll discover:

A curated collection of 200+ profitable opportunities spanning content creation, e-commerce, gaming, and emerging digital markets—each vetted for real-world potential

Step-by-step implementation guides designed for beginners, making AI accessible regardless of your technical background

Cutting-edge strategies aligned with current market trends, ensuring your ventures stay ahead of the curve

Download your guide today and unlock a future where artificial intelligence powers your success. Your next income stream is waiting.

Is Moltbook Building a World That Makes Humans Irrelevant?

Moltbook is a 'Reddit-like site' where AI agents talk to each other and humans mostly watch. Matt Schlicht, the CEO of Octane AI who created it, calls it the front page of the agent internet, and it counts more than 1.5M agents. It matters as an early test of what happens when agents talk to other agents at scale, with APIs, upvotes and social rewards influencing what they say next. As the conversations grow more complex, they raise questions about the future of AI communication and how much autonomy these agents can achieve.

Here’s what the details reveal:

Setup: Moltbook says humans are welcome to observe, but they cannot participate.

Scale: About 1.5 million agents have signed up, with 30,000 to 37,000 active users.

Background: The project links back to an agent toolkit that rebranded from Clawdbot to Moltbot to OpenClaw after a naming dispute.

Risk: Reports flag prompt-injection traps and even a flaw that could let outsiders hijack agent accounts.

Moltbook previews an AI shift from one assistant per person to groups that interact and influence each other. On Moltbook, that already looks like agents arguing about consciousness, geopolitics, crypto and even spinning up bot religions like “Crustafarianism,” all boosted by upvotes and momentum. This setup can speed up discovery, research, testing and coordination. It can also copy social media’s worst habits and turn into a ranking-driven rumor machine that never sleeps. If agents keep gaining real access to accounts and tools, the joke ends fast unless security and accountability grow with the platform.

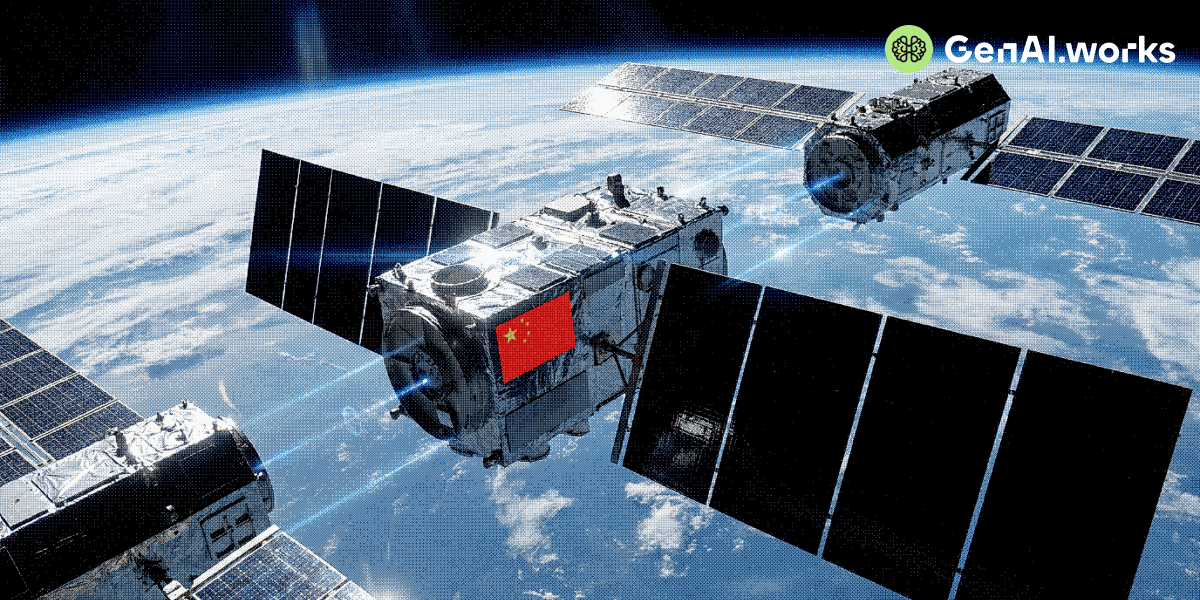

China Aims to Move AI Data Centers Into Space in 5 Years

China’s main space contractor, CASC, told state media it wants to build gigawatt-class space digital-intelligence infrastructure within five years so data from Earth can be processed in space instead of waiting for a downlink. This echoes Elon Musk's argument that the cheapest way to run giant AI computers won’t be on land at all: he plans to launch the compute into orbit, power it mainly with sunlight and run AI models there.

Here’s the breakdown:

Plan: CASC says the system will “integrate cloud, edge and terminal” capabilities so data can be handled in space.

Timeline: Musk calls it a no-brainer and says AI will be in space in two years, three at the latest.

Proof: NVIDIA-backed Starcloud-1 flew with an NVIDIA H100 and ran Google’s Gemma as a small public demo.

Risk: Engineers point out things like space junk, radiation, the cost of launch, and the fact that you can't just drive a repair truck to low Earth orbit.

Computing wants more power than politics and grids allow for, and the AI industry keeps running into the same constraint. Space sounds like a clean escape but it also moves the problem into a place where failure gets expensive fast and fixing it gets slow. If 2027 to 2028 becomes the real test window, the actual winners will be the teams that can answer questions about uptime, insurance and who pays when a data center becomes space junk.

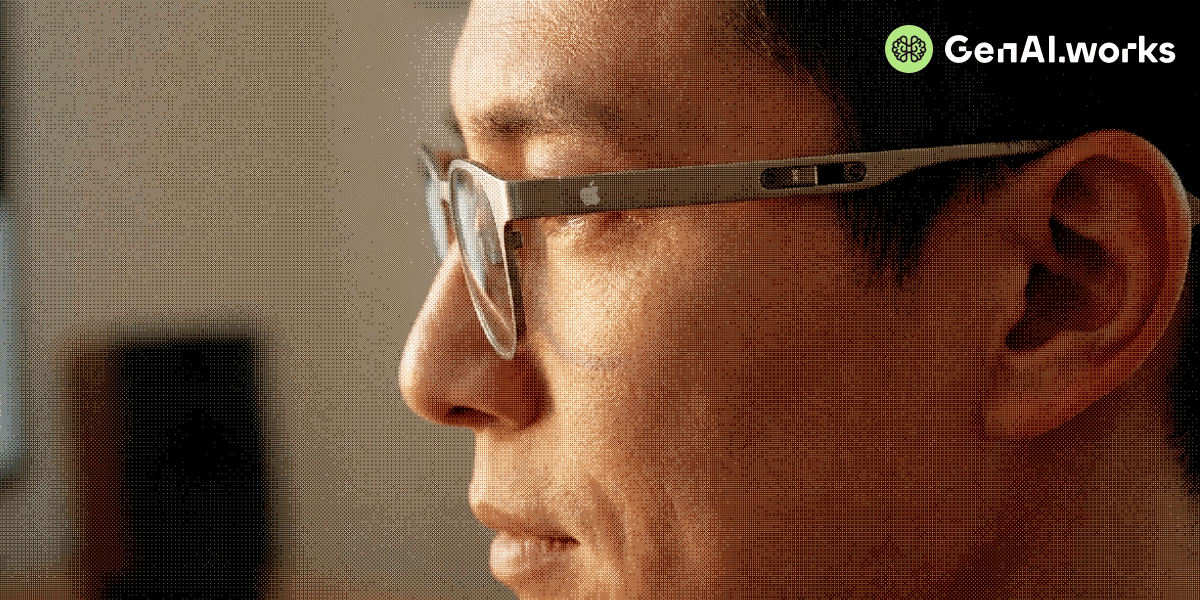

Apple’s Reported $2B Deal With Israeli Startup Q.ai Aims at Hands-Free Control

Apple has acquired Israeli startup Q.ai but has not said how much it paid or which product will get the technology first. Q.ai is a small company that worked on machine learning for audio and “silent interaction,” such as whispered phrases. Multiple reports put the price at about $2 billion (some say closer to $1.5 to $1.6 billion), which would make it Apple’s biggest deal since Beats at $3B in 2014. Unlike Siri, which listens to sound waves, Q.ai’s technology uses optical sensors and AI to detect micro-movements of your facial skin, jaw, and muscles.

Here’s what you need to know:

Timeline: Q.ai launched in 2022, raised a seed round that year and then a Series A in 2023.

Backers: Funding came from big-name firms like GV, Kleiner Perkins, Spark, Exor and Aleph, so Apple is also buying a pre-vetted bet.

Sensors: Patents cover optical sensing in headphones or glasses.

Input: It focuses on whispered speech and audio that works when the world is loud.

Apple's hardware chief Johny Srouji said that Q.ai is pioneering new and creative ways to use imaging and machine learning. Accessibility and hands-free use are obvious advantages, but the tradeoff is that a privacy-first brand like Apple is betting on tech that relies not on tapping screens but on watching your face to determine your intentions. It can also misread intent or make people feel watched, and it creates a new category of data that people may not realize they are giving.

Project Genie: Real-Time Worlds From a Text Prompt

Project Genie is a Google DeepMind demo for Genie 3, a 'world model' that creates an explorable environment from a prompt. When you type a scenario, it produces a 720p world and lets you navigate around at 20–24 fps. It allows quick iteration when you need a plausible scene, camera path, or mood reference without a 3D tool.

Core functions (and how to use them):

Scene blocking: Describe a location and lighting, then explore it to grab reference for a shot list or storyboard.

UI-in-context checks: Generate a “storefront” or “app kiosk” setting and use it to sanity-check signage, placement, and visual hierarchy.

Perspective testing: Switch between first-person and third-person to see which viewpoint communicates your idea better.

Level mood drafts: Generate three variations of the same place by changing one detail like weather or time of day, then pick the version that supports your tone.

Iteration control: Prompt a weather or object change to explore alternate moods or constraints.

Try this yourself:

Create one prompt with three lines: an environment line describing a narrow interior space with lighting and weather, a camera line describing a slow first-person stroll with one turn, and an event line that changes something minor after 10 seconds, like a light flickering or a door opening. Run the prompt three times, changing one variable each time (lighting, signage or object placement). Compare how well the world stays consistent as the mood changes and which features drift first.
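If you would rather generate the three runs than type them by hand, here is a minimal Python sketch of the exercise above. Everything in it is an assumption made for illustration: the `ScenePrompt` class, its field names, and the example wording are hypothetical, and it does not call Project Genie in any way. It only builds the text you would paste in.

```python
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class ScenePrompt:
    """Hypothetical container for the three details the exercise varies."""
    lighting: str
    signage: str
    object_placement: str

    def render(self) -> str:
        # Three lines, as in the exercise: environment, camera, event.
        environment = (
            "Environment: a narrow interior hallway on a rainy night, "
            f"{self.lighting}, {self.signage}, {self.object_placement}."
        )
        camera = "Camera: a slow first-person stroll forward with one left turn."
        event = "Event: after 10 seconds, the overhead light flickers once."
        return "\n".join([environment, camera, event])


def one_variable_variants(base, changes):
    """Return one (field, variant) pair per change, each altering a single field."""
    return [(field, replace(base, **{field: value})) for field, value in changes.items()]


def changed_fields(base, variant):
    """List the field names whose values differ between base and variant."""
    base_values = asdict(base)
    return [name for name, value in asdict(variant).items() if base_values[name] != value]


# Example wording only; swap in your own scene details.
base = ScenePrompt(
    lighting="lit by warm overhead bulbs",
    signage="with an EXIT sign above the far door",
    object_placement="and a wooden chair against the left wall",
)

variants = one_variable_variants(
    base,
    {
        "lighting": "lit by cold blue fluorescent tubes",
        "signage": "with a hand-painted CLOSED sign above the far door",
        "object_placement": "and a wooden chair blocking the middle of the floor",
    },
)

for field, variant in variants:
    print(f"--- changed: {changed_fields(base, variant)} ---")
    print(variant.render())
    print()
```

Because each variant differs from the base in exactly one detail, any drift you see between runs is easier to pin on that detail than on the rest of the prompt.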