Welcome back! A Google DeepMind co-founder is putting a date on AGI, and the lab is hiring an economist to model the collapse of professional careers. You will also see how Microsoft is building its own custom inference chips to run AI like a "utility" and lower the cost of digital brains, and how Anthropic is turning your chat window into a command center for Slack and Figma. We are moving beyond simple chatbots into an era of reasoning agents and physical machines.

In today’s Generative AI Newsletter:

DeepMind co-founder predicts a 50% chance of AGI by 2028.

Microsoft debuts Maia 200 silicon to slash AI operational costs.

Anthropic launches interactive apps to control Figma and Slack inside Claude.

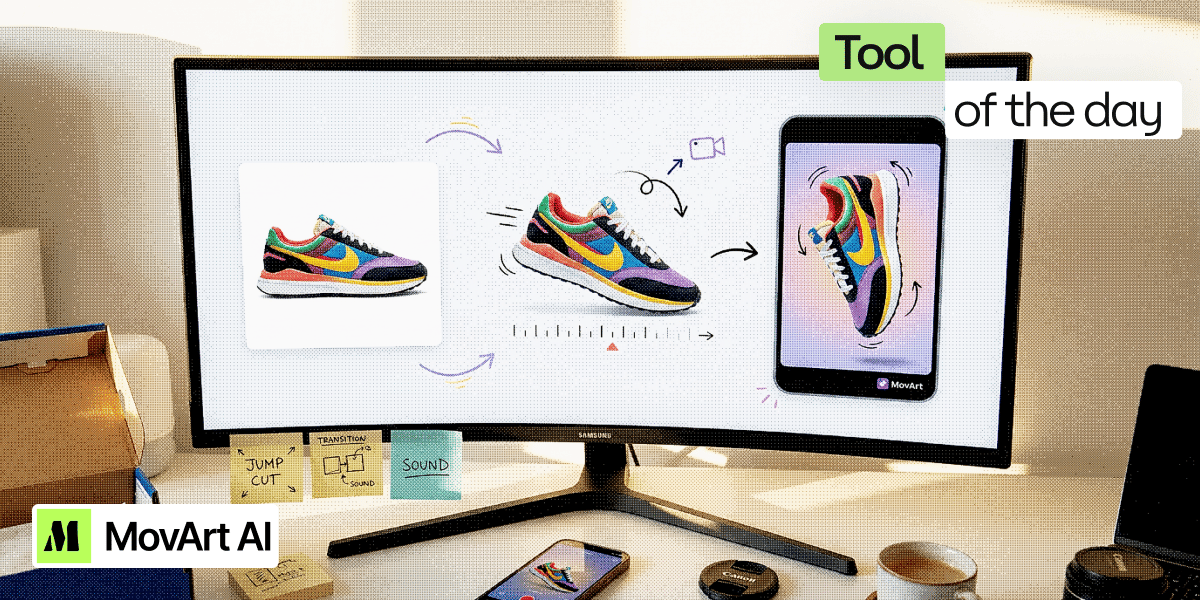

Convert product images into social-ready cinematic video.

Latest Developments

DeepMind Co-Founder: AGI Is Officially On The Horizon

Google DeepMind co-founder Shane Legg believes the era of human-exclusive cognition is ending. In a recent interview, he said that artificial general intelligence is now a measurable milestone on the research calendar, putting a 50% chance on reaching it by 2028. He bases this prediction on the current trajectory of scaling laws and internal benchmarks, and the lab is now shifting its focus to studying the "global fallout" of what Legg calls infinite digital labor.

Preparation for a post-AGI world:

Economic Preparation: Google is recruiting a Chief AGI Economist to model a world where human brainpower is no longer a scarce or expensive resource.

Performance Tiers: The lab uses a five-level framework to track progress, moving from "Emerging" (Level 1) through "Virtuoso" (Level 4) to "Superhuman" (Level 5).

Cognitive Requirements: To meet the AGI threshold, a system must demonstrate the ability to perform any remote task currently handled by a human using a computer.

Physical Limits: Digital minds can keep scaling with more energy and compute because they are not bound by the biological limits of the human brain.

Shane Legg is taking the debate over AGI from the ivory tower to the global labor ledger. The hiring of an economist suggests that DeepMind believes the cost of producing intelligence is about to plummet. If a system can eventually outperform 99% of skilled adults at any digital task, the concept of a professional career could collapse completely. We are essentially watching a company hire a financial architect to manage the potential end of its own current business model.

Special highlight from our network

Your pipeline is green, all tests pass… and a bug still hits production on a path nobody automated.

The problem is misleading coverage: teams automate the predictable flows, the risky ones “stay manual”, and your CI/CD pipeline can’t tell you what’s missing.

In this live session, “Your Green Pipeline Lies About Coverage: Get Real Coverage with Intent + AI,” Narain Muralidharan and Adarsh Anand from Testsigma show how AI agents connect what you meant to test with what actually ran, so you can see gaps before release and achieve coverage that matters.

Registrants also get 3 months of free access to Testsigma’s test management solution and AI agents.

📅 Feb 12, 2026 ⏰ 2:00 PM UTC / 7:00 AM PST

Save your spot and learn how to stop trusting a green pipeline that does not tell the whole story.

Microsoft Launches Maia 200 to Cut AI Inference Costs

Microsoft is currently attempting to break its expensive addiction to external hardware. The company revealed the Maia 200, a custom silicon workhorse designed specifically to handle the massive computation required for AI inference. In the real world, scaling models is hindered primarily by high operational costs. The new chip packs over 100B transistors and is built to run the largest frontier models more efficiently than GPUs.

What the announcement revealed:

Performance Density: The hardware delivers 10 petaflops at 4-bit precision and 5 petaflops at 8-bit precision to accelerate modern workloads.

Competitive Benchmarks: Internal data shows the chip offers 3x the performance of third-generation Amazon Trainium chips and surpasses seventh-generation Google TPUs.

Superintelligence Support: The silicon currently powers the internal research of the Microsoft Superintelligence team and the daily operations of Copilot.

Open Development: Microsoft has released a software development kit to allow academics and frontier labs to optimize their specific models for the platform.

Microsoft wants more control over its supply chain and less dependence on outside chipmakers. General-purpose hardware is hitting diminishing returns, and custom silicon is the response. By building chips that are solely concerned with inference, the company is lowering the rent on the digital brains it lends to the world. If Microsoft can deliver faster, more affordable intelligence than its rivals, the cost of electricity becomes a bigger factor than the chip design itself. The software giant is becoming a power utility to safeguard profits in a world of limitless tokens.

Anthropic Introduces Interactive Apps Inside Claude

Anthropic is moving Claude from a text assistant toward a visual operating system for the enterprise. The company launched interactive apps inside the chatbot interface that execute tasks in Slack, Canva, and Figma. The setup relies on the Model Context Protocol (MCP), an open standard for how AI agents access data and tools. These apps let users work through each tool's own interface, for example editing graphics, without leaving the chat. For professional users, this keeps the workflow in one place instead of spread across browser tabs.

Here’s how Claude is integrating apps:

Interactive Interface: Users can now format Slack messages and edit Canva decks directly inside Claude instead of switching between browser tabs.

MCP Standards: The system uses the MCP Apps extension, allowing any server to deliver a visual interface to supporting AI products.

Agentic Foundation: Anthropic donated the protocol to the Agentic AI Foundation, a new Linux Foundation project backed by partners such as OpenAI, Microsoft, and AWS.

Enterprise Focus: Initial support includes project management tools like Asana and monday.com, with a Salesforce integration expected soon.

Anthropic is using open-source standards to build a moat around the professional desktop. By defining how agents interact with software, it is trying to fix the inconsistency that makes current AI agents feel clunky. This move toward an "everything app" model suggests that the future of software lies in a single chat-based command center rather than a collection of isolated icons. While the convenience is obvious, the reliance on MCP means the security of the entire ecosystem now rests on a shared open standard.

Turn Scripts and Product Images Into Social-Ready Content

MovArt is an AI creative workspace built for businesses that need usable images and short videos without running a full production process. It focuses on helping teams move from rough ideas to assets that can actually be posted, tested, or launched, especially for social media and ads.

Core functions (and how to use them):

Text-to-Video Creation: Simply write a hook or scene to generate video clips featuring dynamic camera movement and cinematic lighting, ideal for rapid concept testing.

Image-to-Video Animation: Upload static product photos to apply controlled motion, such as subtle zooms or rotations, bringing your brand assets to life.

Prompt-Based Editing: Skip the complex timelines; adjust transitions, mood, and pacing using natural language commands.

Ad-Focused Image Generation: Design still images specifically for social feeds, emphasizing the contrast and composition needed to stand out on mobile screens.

Style Routing Across Models: MovArt intelligently selects the best AI models for your specific visual style to ensure brand consistency across all formats.

Try This Yourself:

Take one product image, generate an animated version, and create a still variation. Compare which format communicates your value proposition most effectively, then refine the winner.